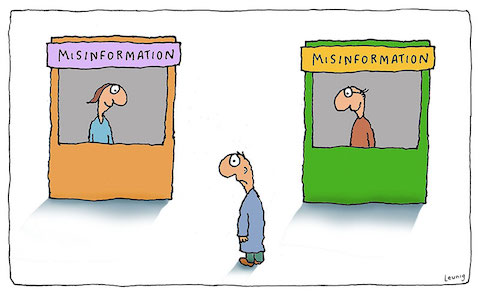

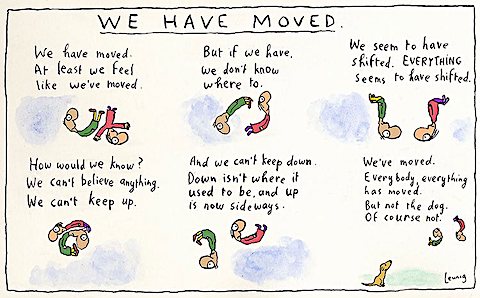

cartoon by Michael Leunig

When I look back at what the world seemed to be like in my youth in the 1960s, it sometimes seems as if nothing has changed at all. We’re still fighting the same battles, supporting and confronting the same ideologies and misconceptions. In other ways, everything seems different now: the amount of information and misinformation available is vastly greater, but the ignorance and incapacity of most people across the political spectrum also seem, paradoxically, greater. And the overall level of competence, especially among people in ‘management’ and positions of power, seems to have declined enormously. The bozos are now driving the bus.

This, perhaps, is what inevitably happens when a system gets overly large and unwieldy. It just stops working properly. And then it stops working entirely.

COLLAPSE WATCH

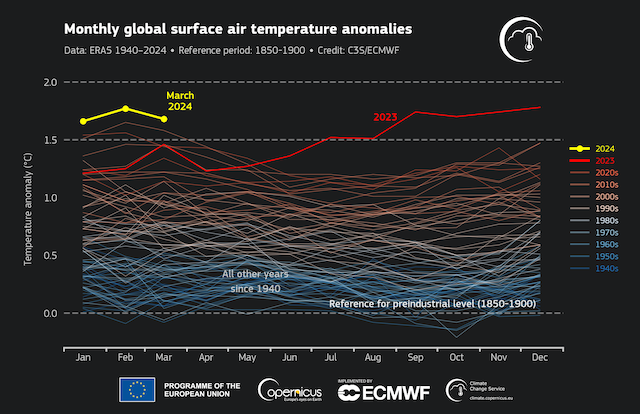

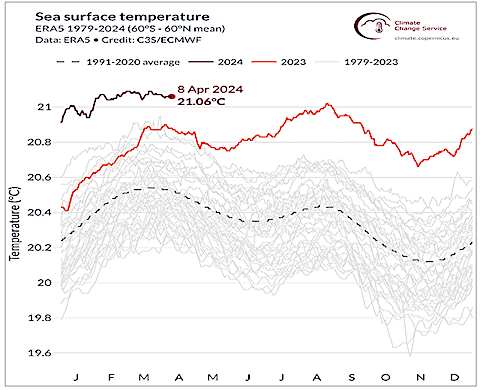

the latest from Copernicus: Bye-bye 1.5; 2.0° here we come!

Why is collapse surprising us?: Tim Morgan re-summarizes the latest evidence of everything falling apart, despite a still-prevailing absurd belief that something is going to magically save us.

Running out of fresh water: Andrew Nikiforuk reviews the world’s rapidly diminishing supply of fresh water.

More people than we thought: A new report shows that the UN and other population projection agencies have, yet again, been overly complacent and overly optimistic in predicting a levelling off of human population growth. Thanks to Richard Heinberg for the link.

Climate migration is not just immigration: A new book suggests that many of the people desperately migrating to new homes in the US will be coming from elsewhere in the country, trying to escape domestic ecological collapse.

So you thought hydro power was “renewable”: In light of a staggering record-low snowpack, BC Hydro is preparing to import even more hydrocarbon-based energy from the US than it has during the last few drought-heavy years.

When trees become carbon-emitters: Erik Michaels demolishes the dream that “regenerative agriculture” can play a role in abating climate collapse. And in another article he describes facing the reality of the inevitability of collapse (and mentions yours truly).

We can’t even get close to ‘there’ from here: Tim Watkins thoroughly deconstructs the utterly impossible targets the UK has set for shifting to “renewable” energy.

This is what social collapse looks like: Haiti is a failed state, now controlled by oligarchs, domestic and foreign armies, and gangs. Listen to get a taste of what life will be like everywhere if economic and political collapse leads to social collapse.

LIVING BETTER

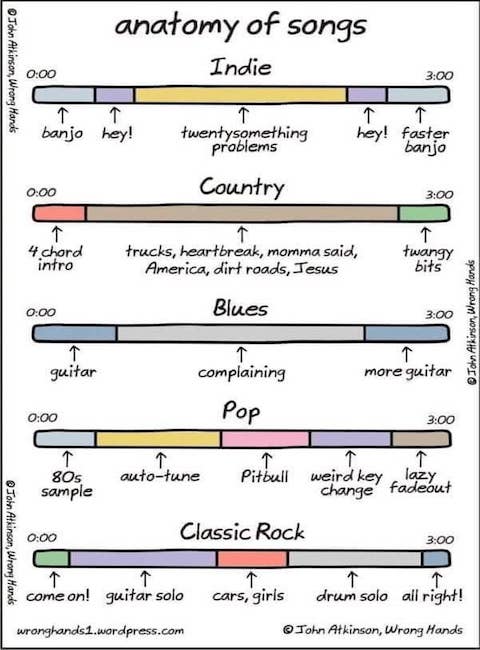

graphic by John Atkinson from the memebrary

When the only measure of value is commercial profit: A short essay by Caitlin Johnstone about being aware that what we’re being asked to take into our bodies, our brains, and our homes is mostly not offered for our welfare; more and more, it’s up to us to look after that.

The benefits of human composting: Another way of giving back to the earth at the end of your life.

Challenging a sacred sports myth: Sports columnist Andrew Berkshire has been absolutely excoriated for daring to suggest that extraordinary sports accomplishments are often possible only for those with exceptional social privilege, and that the myth that “anyone can accomplish anything” if they only work hard at it is wrong, and often cruel.

Taking care: An astonishing cartoon from Matthew Inman at The Oatmeal on dealing with grief, based on a poem by Callista Buchen. Thanks to Hank and John Green for the link.

Not buying the hate: Independent Jewish Voices rejects the ongoing Israeli genocide and calls for an immediate ceasefire and just peace in Israel-Palestine. Thanks to Sharon Goldberg for the link.

POLITICS AND ECONOMICS AS USUAL

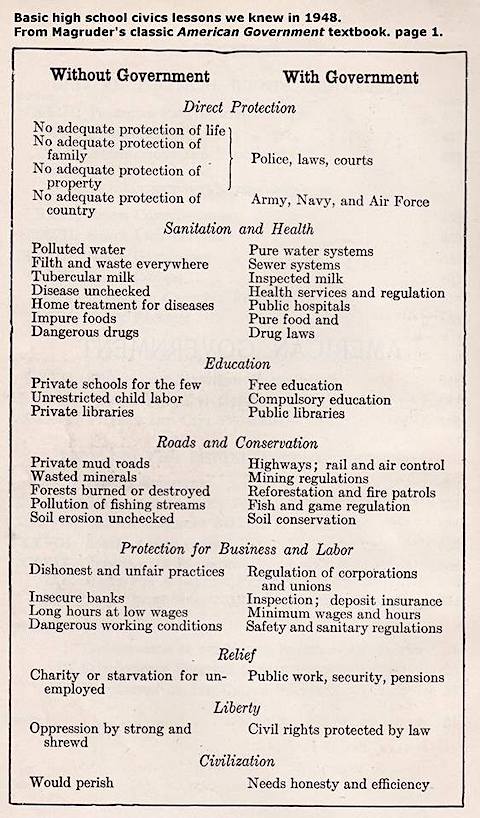

this is also from the memebrary; good thing we no longer have to worry about the things on the left!

Corpocracy, Imperialism & Fascism: Short takes (thanks to John Whiting for many of these):

- How the genocide and slaughter of Palestinians is likely to be remembered in the history books

- Israel’s bombing of the Iranian embassy in Syria appears designed to pull Hezbollah and Syrian (and possibly Iranian) forces into Israel’s genocidal war, so that Biden will have no choice but to join the war

- US Republicans are demanding that the US do even more to support the atrocities in Palestine than what Genocide Joe has done

- The Australian military has refused to honour a freedom-of-information request to disclose its contract with the Israeli government, claiming that disclosure would ruin its “international reputation”

- How Biden is cannibalizing the federal government to fund the Pentagon’s wars

- Biden provides a special “donation” to Africa’s cruelest dictator

- The Empire amps up its losing fight against multipolarity

- How the Alberta government and its oil industry poisoned and destroyed a First Nation

- In case you’ve already forgotten about the bizarre and unexplained Crocus massacre in Moscow

- Maersk, the owner of the ship that destroyed Baltimore’s biggest bridge, has a long history of safety violations

- Argentina’s new extreme right-wing pro-austerity government, having already wrecked the country’s economy, is now denying that the brutal disappearance of 30,000 leftist Argentinians under a previous military junta ever happened

- BC’s government has hired an external private consulting firm to recommend a strategy for managing the province’s long-term care homes, worrying many that it’s the first step to more privatization, despite the ghastly record of the province’s for-profit private health care facilities

- BC’s government has also just hastily approved a new jetty to handle LNG exports, ignoring warnings about its horrific environmental risks

- And in a BC trifecta, a woman has been charged with embezzling, undetected for years, nearly $2M from the Alacrity Foundation of BC, itself the subject of accusations of corruption and mismanagement

- Biden and Trudeau are both lobbying to overturn a court ruling blocking the operation of a pipeline that runs through First Nations land and extremely sensitive ecologies

- Private equity, one of the worst scourges of untrammelled capitalism, having devastated many industries from health care to education (and destroyed the Body Shop), has now set its sights on accounting firms

- Sabine Hossenfelder explains why she gave up on the patriarchal and byzantine academic research establishment, which is now utterly preoccupied with getting grants rather than contributing anything to new knowledge and science

- The culture wars destroying NPR, from the perspective of a conservative

- And kind of on a related note, a U of Florida prof poignantly describes the damage that DeSantis’ culture war against “liberal” universities has wrought (thanks to David Parkinson for the link)

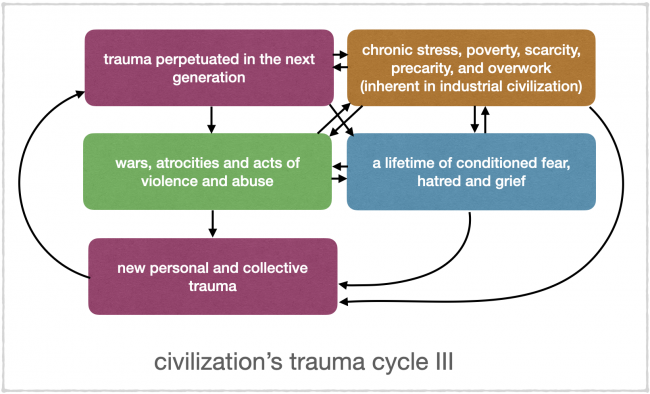

- To understand the Ukraine War, you have to understand the multi-generational forces of fear, hatred and trauma that have played on all sides

- RFK Jr’s New York state campaign director recently gave a speech “confessing” that her candidate’s campaign is ultimately about delivering the presidency to Trump

- And, just in, both Democrats and Republicans approved the extension and expansion of the US Government’s (and FBI’s) warrantless surveillance of Americans and visitors; feel safer now?

Propaganda, Censorship, Misinformation and Disinformation: Short takes:

- Bill Astore explains what happens to a journalist in the mainstream press who dares take a neutral or pro-Palestinian position on conflict in the Mideast

- Half of Americans cannot correctly answer a question about whether more Israelis or more Palestinians have died in the current war; way to go, media!

- The Naked Capitalism site has been threatened with demonetization because a (clearly unmonitored, faulty) AI bot ‘determined’ that some of its posts were “misinformation”

FUN AND INSPIRATION

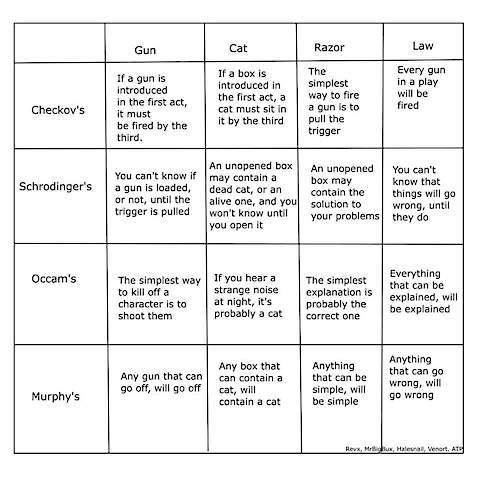

again from the memebrary; I think this is very clever, so if anyone can decode the reference bottom right and identify the original author, I’d love to know who it was

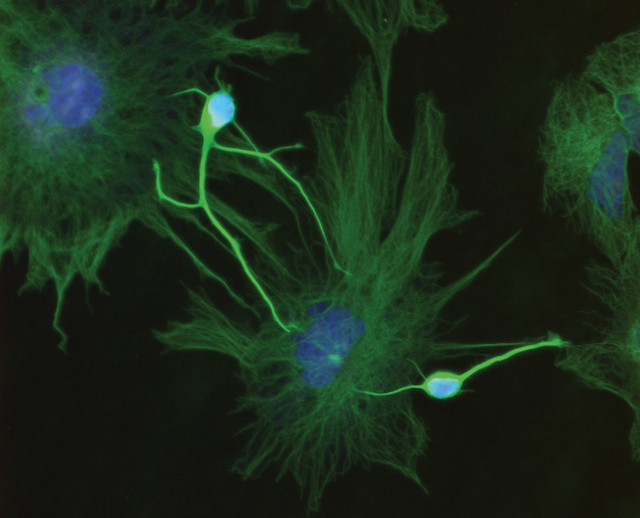

Cloning your beloved deceased pet: Ordering clones from the genetic matter of a dead animal is expensive, risky, and exposes the ‘clones’ to genetic abnormalities. Is this new technology truly an ‘advance’, or is it, like the $500,000/year pills for rare and specialized diseases, an indulgence for the rich and powerful at the expense of everyone else? Especially in a world with so many pets waiting for adoption. And how soon will people want clones of their dead human relatives?

Headlines from The Beaverton (the Canadian equivalent to The Onion; ask a Canadian to explain the humour):

- Media promise to start covering Pierre Poilievre’s transphobic comments as soon as they finish 50th story on how Liberals are unpopular

- In honour of Mulroney, national funeral to be privatized

- Financial planner recommends having more money

- To combat self-checkout theft, stores experimenting with new human cashier pilot program

- “Law & Order Toronto” program criticized as unrealistic after showing Toronto police actually trying to solve crimes

- “If only someone had the power to make corporations behave better,” laments prime minister

- OJ Simpson dies surrounded by family members he didn’t murder

- US Supreme Court rules woman’s life ends at conception

- Study: Best way to get rid of a body is to check it as luggage with Air Canada

More Lari Basilio: The amazing craft of a Brazilian maestro.

The Fourth Turning: End Times?: A review of Peter Turchin’s latest book on the long arcs of civilizations and their castes.

Western pop music 2023 in one song: The latest mashup by DJ Earworm. Clever, fun, and way better than the rather unimaginative individual songs it incorporates.

The demise of quality education: For those who checked out the Sabine Hossenfelder, NPR and DeSantis links above, Aurélien puts it all into a fall-of-the-PMC (Professional Managerial Caste) cultural context.

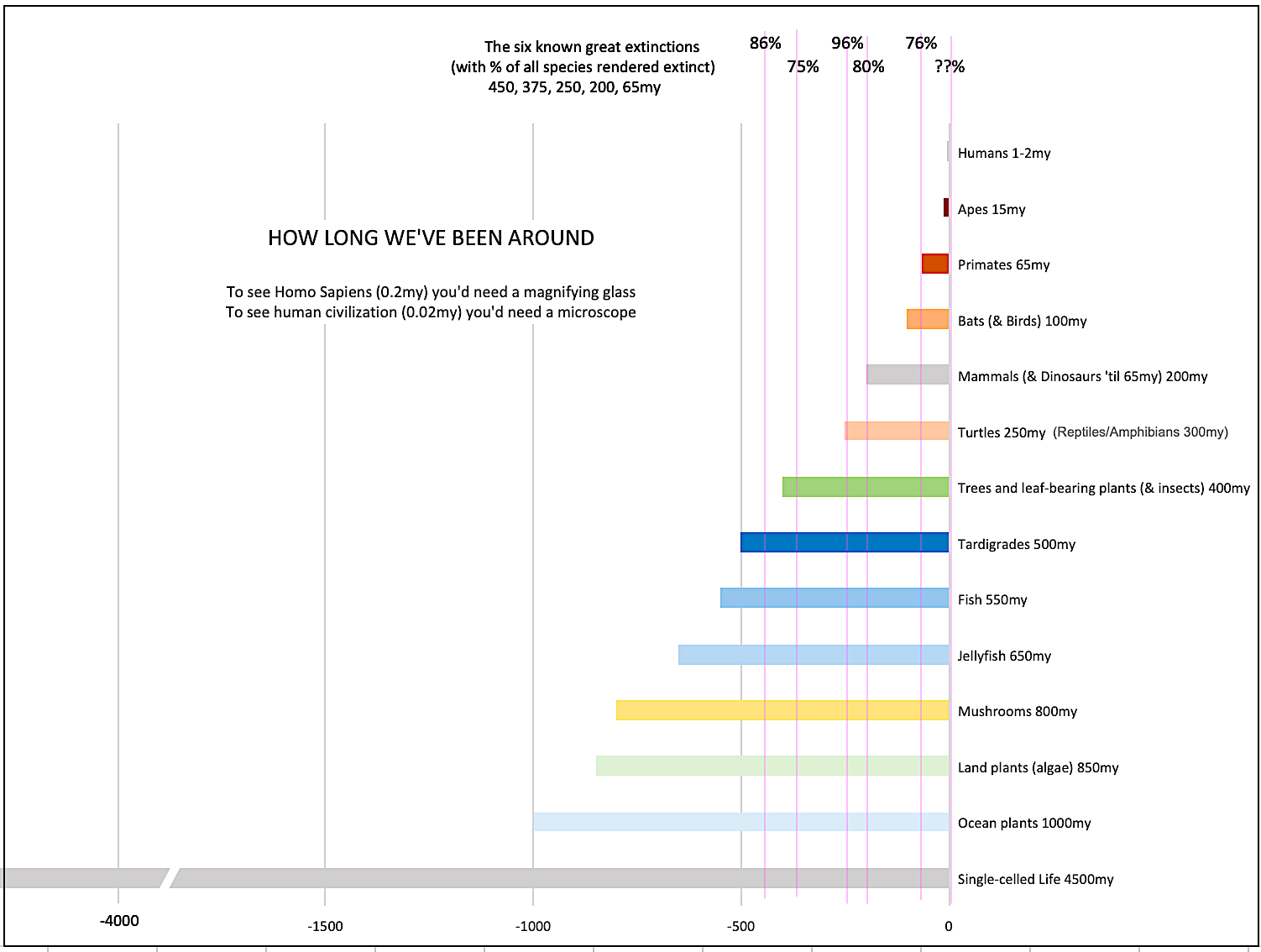

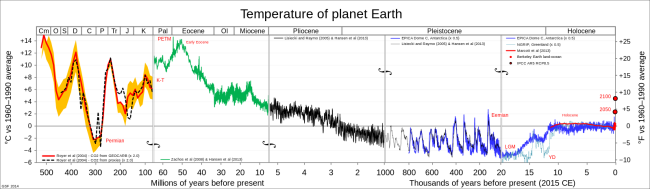

Has global warming happened before?: How the bloom of an arctic fern during the Eocene (14°C above recent average) may have prevented runaway Hothouse Earth and precipitated the ice age(s) that followed.

Sudoku Land: Also via Hank and John, it’s Sudoku, but, uh, with land.

Tardigrades!: And here’s Hank talking about our favourite mysterious tiny animal.

THOUGHTS OF THE MONTH

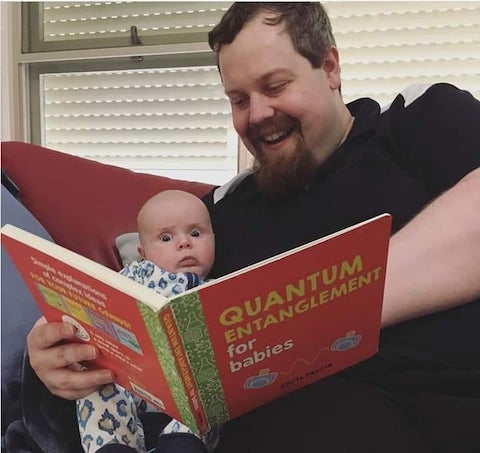

yes, this is a real book in a series by Chris Ferrie; reading to your kids is important

From Aaron Bushnell, the self-immolating anti-war-in-Gaza protester, on imagining (thanks to Caitlin Johnstone for the link):

I’ve realized that a lot of the difference between me and my less radical friends is that they are less capable of imagining a better world than I am. I follow YouTubers like Andrewism that fill my head with concrete images of free, post-scarcity communities and it makes me so much more prepared to reject things about the current world, because I’ve imagined how things could be and that helps me see how extremely bullshit things are right now.

What I’m trying to say is, it’s so important to imagine a better world. Let your thoughts run wild with idealistic dreams of what the world should look like, and let the pain and anger at how it’s not that way flow through you. Let it free your mind and fuel your rage against the machine.

It’s not too late for you or anyone. We can have the world of our dreams tomorrow, but we have to be willing to fight today.

From Callista Buchen, who inspired the Oatmeal cartoon linked above, in the DMQ:

PRETEND

For a while, my daughter worried about a catastrophic hole in the ground wherever we were going. Mom, what if the library is just a giant hole? What if the cereal aisle is a big hole? She imagined canyons replacing each familiar landmark. At every intersection, every turn I promised her there would be no hole. She’d plead: But what will we do? You’ll see, I would say, everything will be fine. When she stopped asking, I grieved her lost worry like the death of an imaginary friend, but since she’s first stacked the blocks in the living room, she’s understood that what we build we can crash.

Anything can go boom, she says now to her little brother, who wants the tower higher, higher still. Mom will hold it, she tells him. She pauses and adds, for now.